Google Research, 2022 & beyond: Robotics

February 14, 2023

Posted by Kendra Byrne, Senior Product Manager, and Jie Tan, Staff Research Scientist, Robotics at Google

Within our lifetimes, we will see robotic technologies that can help with everyday activities, enhancing human productivity and quality of life. Before robots can be broadly useful in helping with practical day-to-day tasks in people-centered spaces (spaces designed for people, not machines), they need to be able to safely and competently provide assistance to people.

In 2022, we focused on challenges that come with enabling robots to be more helpful to people: 1) allowing robots and humans to communicate more efficiently and naturally; 2) enabling robots to understand and apply common sense knowledge in real-world situations; and 3) scaling the number of low-level skills robots need to effectively perform tasks in unstructured environments.

An undercurrent this past year has been the exploration of how large, generalist models, like PaLM, can work alongside other approaches to surface capabilities allowing robots to learn from a breadth of human knowledge and allowing people to engage with robots more naturally. As we do this, we’re transforming robot learning into a scalable data problem so that we can scale learning of generalized low-level skills, like manipulation. In this blog post, we’ll review key learnings and themes from our explorations in 2022.

Bringing the capabilities of LLMs to robotics

An incredible feature of large language models (LLMs) is their ability to encode descriptions and context into a format that’s understandable by both people and machines. When applied to robotics, LLMs let people task robots more easily — just by asking — with natural language. When combined with vision models and robotics learning approaches, LLMs give robots a way to understand the context of a person’s request and make decisions about what actions should be taken to complete it.

One of the underlying concepts is using LLMs to prompt other pretrained models for information that can build context about what is happening in a scene and make predictions about multimodal tasks. This is similar to the Socratic method in teaching, where a teacher asks students questions to lead them through a rational thought process. In “Socratic Models”, we showed that this approach can achieve state-of-the-art performance in zero-shot image captioning and video-to-text retrieval tasks. It also enables new capabilities, like answering free-form questions about video, predicting future activity from video, multimodal assistive dialogue, and, as we’ll discuss next, robot perception and planning.

In “Towards Helpful Robots: Grounding Language in Robotic Affordances”, we partnered with Everyday Robots to ground the PaLM language model in a robotics affordance model to plan long-horizon tasks. In previous machine-learned approaches, robots were limited to short, hard-coded commands, like “Pick up the sponge,” because they struggled with reasoning about the steps needed to complete a task, which is even harder when the task is given as an abstract goal like, “Can you help clean up this spill?”

| With PaLM-SayCan, the robot acts as the language model's "hands and eyes," while the language model supplies high-level semantic knowledge about the task. |

For this approach to work, one needs both an LLM that can predict the sequence of steps to complete long-horizon tasks and an affordance model representing the skills a robot can actually perform in a given situation. In “Extracting Skill-Centric State Abstractions from Value Functions”, we showed that the value function in reinforcement learning (RL) models can be used to build the affordance model, an abstract representation of the actions a robot can perform under different states. This lets us connect long-horizon real-world tasks, like “tidy the living room”, to the short-horizon skills needed to complete them, like correctly picking, placing, and arranging items.
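The interplay between the two models can be sketched in a few lines of Python. This is a minimal illustration, not the PaLM-SayCan implementation: the function name `select_skill`, the skill strings, and all numbers are made up, and the LLM and affordance model are replaced by hard-coded scores. It assumes the two scores are combined by multiplication, so a skill is chosen only if it is both useful for the instruction and feasible in the current state.

```python
def select_skill(llm_scores, affordances):
    """Return the skill with the highest (LLM score x affordance value).

    llm_scores:  hypothetical LLM estimate that a skill is a useful next step.
    affordances: hypothetical value-function estimate that the skill can
                 succeed from the robot's current state.
    """
    combined = {
        skill: llm_scores[skill] * affordances.get(skill, 0.0)
        for skill in llm_scores
    }
    best = max(combined, key=combined.get)
    return best, combined


# Made-up scores for the instruction "Can you help clean up this spill?"
llm_scores = {
    "find a sponge": 0.50,
    "pick up the sponge": 0.30,
    "go to the trash can": 0.15,
    "done": 0.05,
}
# No sponge is in view yet, so picking one up has a low affordance value.
affordances = {
    "find a sponge": 0.90,
    "pick up the sponge": 0.10,
    "go to the trash can": 0.80,
    "done": 0.20,
}

best_skill, combined_scores = select_skill(llm_scores, affordances)
print(best_skill)  # prints: find a sponge
```

In this toy setting, "pick up the sponge" is plausible to the language model but is ruled out by its low affordance value, which is the kind of grounding the affordance model is meant to provide.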

Having both an LLM and an affordance model doesn’t mean that the robot will actually be able to complete the task successfully. However, with Inner Monologue, we closed the loop on LLM-based task planning with other sources of information, like human feedback or scene understanding, to detect when the robot fails to complete the task correctly. Using a robot from Everyday Robots, we showed that LLMs can effectively replan if the current or previous plan steps failed, allowing the robot to recover from failures and complete complex tasks like “Put a coke in the top drawer,” as shown in the video below.

An emergent capability from closing the loop on LLM-based task planning that we saw with Inner Monologue is that the robot can react to changes in the high-level goal mid-task. For example, a person might tell the robot to change its behavior as it is happening, by offering quick corrections or redirecting the robot to another task. This behavior is especially useful to let people interactively control and customize robot tasks when robots are working near people.
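The closed-loop idea can be illustrated with a small Python sketch. Everything here is a stand-in: `scripted_planner` plays the role of the LLM, `make_flaky_executor` plays the role of the robot plus a success detector, and the plan steps are invented. The point is only the control flow, in which feedback from each step is appended to a running history that the planner reads before choosing the next step.

```python
PLAN = ["pick up the coke", "open the top drawer", "put the coke in the drawer"]


def scripted_planner(history):
    """Stand-in for an LLM: propose the first step not yet completed."""
    completed = sum(1 for line in history if line == "Success: True")
    return PLAN[completed] if completed < len(PLAN) else "done"


def make_flaky_executor(fail_once_on):
    """Stand-in for the robot: fails the first attempt at one given step."""
    attempts = {}

    def execute(step):
        attempts[step] = attempts.get(step, 0) + 1
        return not (step == fail_once_on and attempts[step] == 1)

    return execute


def run_closed_loop(goal, plan_next_step, execute, max_steps=10):
    """Plan, act, record feedback, and replan until the planner says done."""
    history = [f"Goal: {goal}"]
    for _ in range(max_steps):
        step = plan_next_step(history)
        if step == "done":
            break
        success = execute(step)
        # Feedback goes back into the text the planner conditions on.
        history.append(f"Robot: {step}")
        history.append(f"Success: {success}")
    return history


history = run_closed_loop(
    "Put a coke in the top drawer",
    scripted_planner,
    make_flaky_executor("pick up the coke"),
)
for line in history:
    print(line)
```

Because the failed grasp is recorded in the history, the planner proposes the same step again instead of moving on. A mid-task correction from a person could, under the same assumptions, be appended to the history as another line of feedback.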

While natural language makes it easier for people to specify and modify robot tasks, one of the challenges is being able to react in real time to the full vocabulary people can use to describe tasks that a robot is capable of doing. In “Talking to Robots in Real Time”, we demonstrated a large-scale imitation learning framework for producing real-time, open-vocabulary, language-conditionable robots. With one policy we were able to address over 87,000 unique instructions, with an estimated average success rate of 93.5%. As part of this project, we released Language-Table, the largest available language-annotated robot dataset, which we hope will drive further research focused on real-time language-controllable robots.

| Examples of long horizon goals reached under real time human language guidance. |

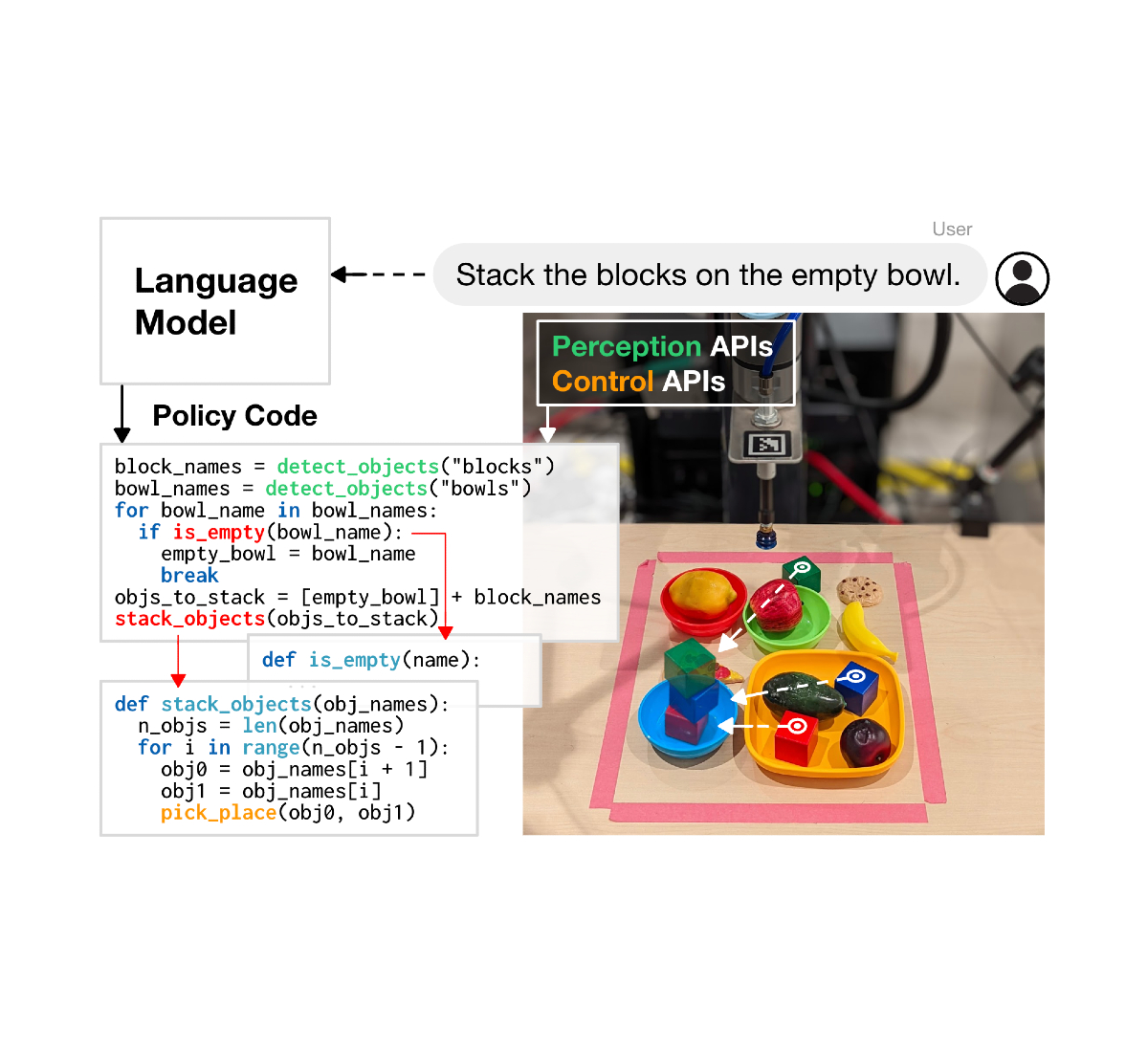

We’re also excited about the potential for LLMs to write code that can control robot actions. Code-writing approaches, like in “Robots That Write Their Own Code”, show promise in increasing the complexity of tasks robots can complete by autonomously generating new code that re-composes API calls, synthesizes new functions, and expresses feedback loops to assemble new behaviors at runtime.

Turning robot learning into a scalable data problem

Large language and multimodal models help robots understand the context in which they’re operating, like what’s happening in a scene and what the robot is expected to do. But robots also need low-level physical skills to complete tasks in the physical world, like picking up and precisely placing objects.

While we often take these physical skills for granted, executing them hundreds of times every day without even thinking, they present significant challenges to robots. For example, to pick up an object, the robot needs to perceive and understand the environment, reason about the spatial relation and contact dynamics between its gripper and the object, precisely actuate an arm with many degrees of freedom, and exert the right amount of force to stably grasp the object without breaking it. The difficulty of learning these low-level skills is known as Moravec's paradox: reasoning requires very little computation, but sensorimotor and perception skills require enormous computational resources.

Inspired by the recent success of LLMs, which shows that the generalization and performance of large Transformer-based models scale with the amount of data, we are taking a data-driven approach, turning the problem of learning low-level physical skills into a scalable data problem. With Robotics Transformer-1 (RT-1), we trained a robot manipulation policy on a large-scale, real-world robotics dataset of 130k episodes that cover 700+ tasks using a fleet of 13 robots from Everyday Robots, and showed the same trend for robotics: increasing the scale and diversity of data improves the model’s ability to generalize to new tasks, environments, and objects.

| Example PaLM-SayCan-RT1 executions of long-horizon tasks in real kitchens. |

Behind both language models and many of our robotics learning approaches, like RT-1, are Transformers, which allow models to make sense of Internet-scale data. Unlike language modeling, robotics is challenged by multimodal representations of constantly changing environments and by limited on-robot compute. In 2020, we introduced Performers as an approach to make Transformers more computationally efficient, which has implications for many applications beyond robotics. In Performer-MPC, we applied this to introduce a new class of implicit control policies combining the benefits of imitation learning with the robust handling of system constraints from Model Predictive Control (MPC). We show a >40% improvement on the robot reaching its goal and a >65% improvement on social metrics when navigating around humans in comparison to a standard MPC policy. Performer-MPC provides 8 ms latency for the 8.3M parameter model, making on-robot deployment of Transformers practical.

| Navigation robot maneuvering through highly constrained spaces using: Regular MPC, Explicit Policy, and Performer-MPC. |
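To give a feel for why Performer-style attention is cheaper than standard attention, here is a simplified pure-Python sketch. It is not the Performer or Performer-MPC code: it uses plain Gaussian random projections (an assumption made for brevity), the names `feature_map` and `linear_attention` are hypothetical, and the inputs are random toy vectors. The idea shown is that keys and values are summarized once through a positive random feature map, so each query is answered from that summary and the cost grows linearly with sequence length.

```python
import math
import random


def feature_map(x, projections):
    """Positive random features; their dot products approximate exp(q . k)."""
    half_sq_norm = sum(v * v for v in x) / 2.0
    scale = 1.0 / math.sqrt(len(projections))
    return [
        scale * math.exp(sum(w_i * x_i for w_i, x_i in zip(w, x)) - half_sq_norm)
        for w in projections
    ]


def linear_attention(queries, keys, values, projections):
    """Approximate softmax attention without forming the L x L matrix."""
    m, d_v = len(projections), len(values[0])
    kv = [[0.0] * d_v for _ in range(m)]  # sum over keys of phi(k) v^T
    k_sum = [0.0] * m                     # sum over keys of phi(k)
    for k, v in zip(keys, values):
        phi_k = feature_map(k, projections)
        for a in range(m):
            k_sum[a] += phi_k[a]
            for b in range(d_v):
                kv[a][b] += phi_k[a] * v[b]
    outputs = []
    for q in queries:
        phi_q = feature_map(q, projections)
        normalizer = sum(p * s for p, s in zip(phi_q, k_sum))
        outputs.append(
            [sum(phi_q[a] * kv[a][b] for a in range(m)) / normalizer
             for b in range(d_v)]
        )
    return outputs


# Toy inputs (all values are arbitrary).
rng = random.Random(0)
dim, num_features = 4, 64
projections = [[rng.gauss(0.0, 1.0) for _ in range(dim)] for _ in range(num_features)]
queries = [[rng.uniform(-0.5, 0.5) for _ in range(dim)] for _ in range(3)]
keys = [[rng.uniform(-0.5, 0.5) for _ in range(dim)] for _ in range(5)]
values = [[rng.uniform(-1.0, 1.0) for _ in range(2)] for _ in range(5)]
outputs = linear_attention(queries, keys, values, projections)
```

Since every feature is positive, each output row is a weighted average of the value vectors, just as in softmax attention. The quality of the approximation depends on the number of random features.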

In the last year, our team has shown that data-driven approaches are generally applicable on different robotic platforms in diverse environments to learn a wide range of tasks, including mobile manipulation, navigation, locomotion and table tennis. This shows us a clear path forward for learning low-level robot skills: scalable data collection. Unlike video and text data, which are abundant on the Internet, robotic data is extremely scarce and hard to acquire. Finding approaches to collect and efficiently use rich datasets representative of real-world interactions is key to our data-driven approaches.

Simulation is a fast, safe, and easily parallelizable option, but it is difficult to replicate the full environment, especially physics and human-robot interactions, in simulation. In i-Sim2Real, we showed an approach to address the sim-to-real gap and learn to play table tennis with a human opponent by bootstrapping from a simple model of human behavior and alternating between training in simulation and deploying in the real world. In each iteration, both the human behavior model and the policy are refined.

| Learning to play table tennis with a human opponent. |

While simulation helps, collecting data in the real world is essential for fine-tuning simulation policies or adapting existing policies in new environments. While learning, robots are prone to failure, which can damage the robots themselves and their surroundings, especially in the early stages of learning when they are exploring how to interact with the world. We need to collect training data safely, even while the robot is learning, and enable the robot to autonomously recover from failure. In “Learning Locomotion Skills Safely in the Real World”, we introduced a safe RL framework that switches between a “learner policy” optimized to perform the desired task and a “safe recovery policy” that keeps the robot out of unsafe states. In “Legged Robots that Keep on Learning”, we trained a reset policy so the robot can recover from failures, like learning to stand up by itself after falling.

| Automatic reset policies enable the robot to continue learning in a lifelong fashion without human supervision. |
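The switching between a learner policy and a safe recovery policy described above can be sketched with a toy one-dimensional example. This is an illustration under invented assumptions, not the framework from the paper: the state is a single "tilt" value, the thresholds `TRIGGER` and `RELEASE` are made up, and the learner is assumed to change the tilt by at most 0.2 per step so that handing over control at `TRIGGER` always leaves a margin before `SAFE_LIMIT`.

```python
SAFE_LIMIT = 1.0  # |tilt| at or beyond this counts as a fall (assumed)
TRIGGER = 0.7     # hand control to the recovery policy beyond this (assumed)
RELEASE = 0.3     # hand control back to the learner below this (assumed)


def recovery_policy(tilt):
    """Hypothetical recovery behavior: halve the tilt on every step."""
    return -0.5 * tilt


def run_episode(learner_policy, steps=50):
    """Run the learner, but let the recovery policy take over near the limit."""
    tilt, recovering = 0.0, False
    max_abs_tilt, recovery_steps = 0.0, 0
    for _ in range(steps):
        # Two thresholds avoid rapid switching back and forth at one boundary.
        if abs(tilt) > TRIGGER:
            recovering = True
        elif abs(tilt) < RELEASE:
            recovering = False
        if recovering:
            action = recovery_policy(tilt)
            recovery_steps += 1
        else:
            action = learner_policy(tilt)
        tilt += action
        max_abs_tilt = max(max_abs_tilt, abs(tilt))
    return max_abs_tilt, recovery_steps


def reckless_learner(tilt):
    """A deliberately bad learner that always leans further over."""
    return 0.2


max_abs_tilt, recovery_steps = run_episode(reckless_learner)
print(max_abs_tilt < SAFE_LIMIT, recovery_steps > 0)  # prints: True True
```

Even with a learner that always pushes toward the unsafe region, the tilt stays below the limit because the recovery policy repeatedly takes over, and the learner still gets to act (and so collect data) whenever the state is comfortably safe.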

While robot data is scarce, videos of people performing different tasks are abundant. Of course, robots aren’t built like people — so the idea of robotic learning from people raises the problem of transferring learning across different embodiments. In “Robot See, Robot Do”, we developed Cross-Embodiment Inverse Reinforcement Learning to learn new tasks by watching people. Instead of trying to replicate the task exactly as a person would, we learn the high-level task objective, and summarize that knowledge in the form of a reward function. This type of demonstration learning could allow robots to learn skills by watching videos readily available on the internet.
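One simple way to turn a learned task representation into a reward function is to measure how far the current observation is from a goal in embedding space. The sketch below assumes that form; it is not taken from the paper's code. The embedding model is assumed to exist and is not shown, the helper names are hypothetical, and the two-dimensional vectors are made up.

```python
import math


def mean_embedding(embeddings):
    """Average a list of equal-length embedding vectors."""
    n = len(embeddings)
    return [sum(column) / n for column in zip(*embeddings)]


def embedding_reward(observation_embedding, goal):
    """Reward is higher (closer to zero) the nearer we are to the goal."""
    return -math.dist(observation_embedding, goal)


# Pretend these are embeddings of the final frames of two human demonstrations.
demo_final_frames = [[1.0, 0.0], [3.0, 0.0]]
goal = mean_embedding(demo_final_frames)

far = embedding_reward([0.0, 0.0], goal)
near = embedding_reward([1.5, 0.0], goal)
print(far, near)  # prints: -2.0 -0.5
```

Because the reward depends only on the embedding and not on how the body moves, the same function can in principle score a robot's progress even though the demonstrations came from people.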

We’re also progressing towards making our learning algorithms more data efficient so that we’re not relying only on scaling data collection. We improved the efficiency of RL approaches by incorporating prior information, including predictive information, adversarial motion priors, and guide policies. Further improvements are gained by utilizing a novel structured dynamical systems architecture and combining RL with trajectory optimization, supported by novel solvers. These types of prior information helped alleviate the exploration challenges, served as good regularizers, and significantly reduced the amount of data required. Furthermore, our team has invested heavily in more data-efficient imitation learning. We showed that a simple imitation learning approach, BC-Z, can enable zero-shot generalization to new tasks that were not seen during training. We also introduced an iterative imitation learning algorithm, GoalsEye, which combined Learning from Play and Goal-Conditioned Behavior Cloning for high-speed and high-precision table tennis games. On the theoretical front, we investigated dynamical-systems stability for characterizing the sample complexity of imitation learning, and the role of capturing failure-and-recovery within demonstration data to better condition offline learning from smaller datasets.

Closing

Advances in large models across the field of AI have spurred a leap in capabilities for robot learning. This past year, we’ve seen the sense of context and sequencing of events captured in LLMs help solve long-horizon planning for robotics and make robots easier for people to interact with and task. We’ve also seen a scalable path to learning robust and generalizable robot behaviors by applying a Transformer model architecture to robot learning. We continue to open source datasets, like “Scanned Objects: A Dataset of 3D-Scanned Common Household Items”, and models, like RT-1, in the spirit of participating in the broader research community. We’re excited about building on these research themes in the coming year to enable helpful robots.

Acknowledgements

We would like to thank everyone who supported our research. This includes the entire Robotics at Google team, and collaborators from Everyday Robots and Google Research. We also want to thank our external collaborators, including UC Berkeley, Stanford, Georgia Tech, University of Washington, MIT, CMU and U Penn.

Google Research, 2022 & beyond

This was the sixth blog post in the “Google Research, 2022 & Beyond” series. Other posts in this series are listed in the table below:
