Predicting Properties of Molecules with Machine Learning

April 7, 2017

Posted by George Dahl, Research Scientist, Google Brain Team

Recently there have been many exciting applications of machine learning (ML) to chemistry, particularly in chemical search problems, from drug discovery and battery design to finding better OLEDs and catalysts. Historically, chemists have used numerical approximations to Schrödinger’s equation, such as Density Functional Theory (DFT), in these sorts of chemical searches. However, the computational cost of these approximations limits the size of the search. In the hope of enabling larger searches, several research groups have created ML models to predict chemical properties using training data generated by DFT (e.g. Rupp et al. and Behler and Parrinello). Expanding upon this previous work, we have been applying various modern ML methods to the QM9 benchmark, a public collection of molecules paired with DFT-computed electronic, thermodynamic, and vibrational properties.

We have recently posted two papers describing our research in this area that grew out of a collaboration between the Google Brain team, the Google Accelerated Science team, DeepMind, and the University of Basel. The first paper includes a new featurization of molecules and a systematic assessment of a multitude of machine learning methods on the QM9 benchmark. After trying many existing approaches on this benchmark, we worked on improving the most promising deep neural network models.

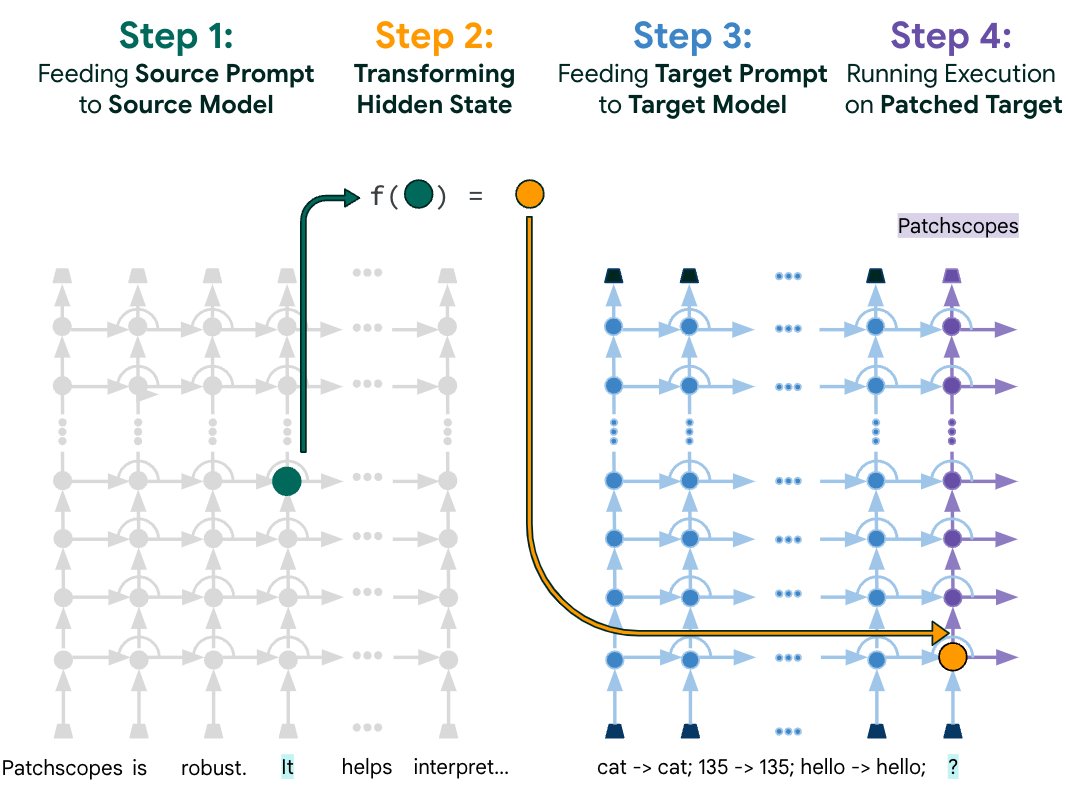

The resulting second paper, “Neural Message Passing for Quantum Chemistry,” describes a model family called Message Passing Neural Networks (MPNNs), which are defined abstractly enough to include many previous neural net models that are invariant to graph symmetries. We developed novel variations within the MPNN family which significantly outperform all baseline methods on the QM9 benchmark, with improvements of nearly a factor of four on some targets.
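As a rough sketch of that abstraction, the forward pass of an MPNN can be written as a message passing phase followed by a readout. The notation below is a summary of the formulation in the paper rather than a complete specification: $h_v^t$ is the hidden state of node $v$ at step $t$, $e_{vw}$ is the feature vector of the edge between $v$ and $w$, $N(v)$ is the set of neighbors of $v$, and $M_t$, $U_t$, and $R$ are learned message, update, and readout functions.

$$
\begin{aligned}
m_v^{t+1} &= \sum_{w \in N(v)} M_t\left(h_v^t, h_w^t, e_{vw}\right) \\
h_v^{t+1} &= U_t\left(h_v^t, m_v^{t+1}\right) \\
\hat{y} &= R\left(\{\, h_v^T \mid v \in G \,\}\right)
\end{aligned}
$$

Different choices of $M_t$, $U_t$, and $R$ recover different models from the literature. Because the messages are summed over neighbors and $R$ operates on a set of node states, the prediction $\hat{y}$ does not depend on how the nodes are numbered.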

One reason molecular data is so interesting from a machine learning standpoint is that one natural representation of a molecule is as a graph with atoms as nodes and bonds as edges. Models that can leverage inherent symmetries in data will tend to generalize better — part of the success of convolutional neural networks on images is due to their ability to incorporate our prior knowledge about the invariances of image data (e.g. a picture of a dog shifted to the left is still a picture of a dog). Invariance to graph symmetries is a particularly desirable property for machine learning models that operate on graph data, and there has been a lot of interesting research in this area as well (e.g. Li et al., Duvenaud et al., Kearnes et al., Defferrard et al.). However, despite this progress, much work remains. We would like to find the best versions of these models for chemistry (and other) applications and map out the connections between different models proposed in the literature.
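To make the graph view and the invariance property concrete, here is a minimal toy sketch in Python. It is not the model from our papers: the function names (`message_passing`, `relabel`) are hypothetical, the atom features are just atomic numbers, and fixed integer arithmetic stands in for the learned neural networks a real model would use. It only illustrates that summing messages over neighbors and then summing over atoms gives an output that ignores how the atoms are numbered.

```python
# Toy illustration only: a molecule as a graph, a hand-written message passing
# scheme, and a check that the output ignores atom ordering.

def message_passing(atom_features, bonds, steps=2):
    """Return a graph-level value from a sum-based message passing sketch.

    atom_features: list of ints, one per atom (here, the atomic number).
    bonds: list of (i, j, order) tuples over atom indices; bonds are undirected.
    """
    # Build an adjacency list so each atom can look up its bonded neighbors.
    neighbors = {i: [] for i in range(len(atom_features))}
    for i, j, order in bonds:
        neighbors[i].append((j, order))
        neighbors[j].append((i, order))

    hidden = list(atom_features)
    for _ in range(steps):
        # Message: sum over neighbors of (neighbor state * bond order).
        messages = [
            sum(hidden[j] * order for j, order in neighbors[i])
            for i in range(len(hidden))
        ]
        # Update: combine each atom's state with its incoming message.
        hidden = [h + m for h, m in zip(hidden, messages)]

    # Readout: summing over atoms makes the result independent of atom order.
    return sum(hidden)


def relabel(atom_features, bonds, perm):
    """Renumber atoms so that old index i becomes perm[i]."""
    new_features = [0] * len(atom_features)
    for old, new in enumerate(perm):
        new_features[new] = atom_features[old]
    new_bonds = [(perm[i], perm[j], order) for i, j, order in bonds]
    return new_features, new_bonds


# Formaldehyde (H2C=O): atoms by atomic number, bonds as (atom, atom, order).
atoms = [6, 8, 1, 1]                        # C, O, H, H
bonds = [(0, 1, 2), (0, 2, 1), (0, 3, 1)]   # C=O, C-H, C-H

original = message_passing(atoms, bonds)
shuffled = message_passing(*relabel(atoms, bonds, perm=[2, 0, 3, 1]))
print(original == shuffled)  # True: same molecule, different atom numbering
```

A model that instead consumed the atoms as an ordered list would have to learn this invariance from data, which is one reason graph-structured models are attractive for molecules.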

Our MPNNs set a new state of the art for predicting all 13 chemical properties in QM9. On this particular set of molecules, our model can predict 11 of these properties accurately enough to potentially be useful to chemists, and does so up to 300,000 times faster than simulating them with DFT. However, much work remains to be done before MPNNs can be of real practical use to chemists. In particular, MPNNs must be applied to a significantly more diverse set of molecules (e.g. larger or with a more varied set of heavy atoms) than exist in QM9. Of course, even with a realistic training set, generalization to very different molecules could still be poor. Overcoming both of these challenges will involve making progress on questions, such as generalization, that are at the heart of machine learning research.

Predicting the properties of molecules is a practically important problem that both benefits from advanced machine learning techniques and presents interesting fundamental research challenges for learning algorithms. Eventually, such predictions could aid the design of new medicines and materials that benefit humanity. At Google, we feel that it’s important to disseminate our research and to help train new researchers in machine learning. As such, we are delighted that the first and second authors of our MPNN paper are Google Brain residents.
