Real-time Continuous Transcription with Live Transcribe

February 4, 2019

Posted by Sagar Savla, Product Manager, Machine Perception

The World Health Organization (WHO) estimates that there are 466 million people globally who are deaf or hard of hearing. A crucial technology for empowering communication and inclusive access to the world's information for this population is automatic speech recognition (ASR), which enables computers to detect spoken language and transcribe it into text for reading. Google's ASR is behind automated captions in YouTube, presentations in Slides and also phone calls. However, while ASR has seen multiple improvements in the past couple of years, the deaf and hard of hearing still mainly rely on manual-transcription services like CART in the US, Palantypist in the UK, or STTR in other countries. These services can be prohibitively expensive and often must be scheduled far in advance, diminishing the opportunities for the deaf and hard of hearing to participate in impromptu conversations as well as social occasions. We believe that technology can bridge this gap and empower this community.

Today, we're announcing Live Transcribe, a free Android service that makes real-world conversations more accessible by bringing the power of automatic captioning into everyday, conversational use. Powered by Google Cloud, Live Transcribe captions conversations in real time, supporting over 70 languages that together cover more than 80% of the world's population. You can launch it with a single tap from within any app, directly from the accessibility icon in the system tray.

Building Live Transcribe

Previous ASR-based transcription systems have generally required compute-intensive models, exhaustive user research and expensive access to connectivity, all of which hinder the adoption of automated continuous transcription. To address these issues and ensure reasonably accurate real-time transcription, Live Transcribe combines the results of extensive user experience (UX) research with seamless and sustainable connectivity to speech processing servers. Furthermore, we needed to ensure that connectivity to these servers didn't cause our users excessive data usage.

Relying on cloud ASR gives us greater accuracy, but we wanted to reduce the network data consumption that Live Transcribe requires. To do this, we implemented an on-device neural network-based speech detector, built on our previous work with AudioSet. This network is an image-like model, similar to our published VGGish model. It detects speech and automatically manages network connections to the cloud ASR engine, minimizing data usage over long periods of use.
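
To illustrate how a speech detector can manage a network connection, here is a minimal Python sketch. It is not the Live Transcribe implementation: the `SpeechGate` class, the threshold, and the hangover period are hypothetical, and the detector scores are supplied as plain numbers rather than produced by a neural network. The gate opens the connection when speech is likely and closes it only after a stretch of silence, so brief pauses do not cause reconnects.

```python
from dataclasses import dataclass


@dataclass
class SpeechGate:
    """Decides when a (hypothetical) cloud ASR stream should be open."""
    open_threshold: float = 0.7   # assumed: speech probability needed to connect
    hangover_s: float = 5.0       # assumed: silence tolerated before disconnecting
    connected: bool = False
    _last_speech_t: float = float("-inf")

    def update(self, speech_prob: float, t: float) -> bool:
        """Feed one detector score at time t (seconds); return connection state."""
        if speech_prob >= self.open_threshold:
            # Speech detected: remember when, and make sure we are connected.
            self._last_speech_t = t
            self.connected = True
        elif self.connected and t - self._last_speech_t > self.hangover_s:
            # Silence has lasted longer than the hangover: drop the connection.
            self.connected = False
        return self.connected


def connected_seconds(scores, frame_s=1.0, **gate_kwargs):
    """Total time the connection would be open for a sequence of scores."""
    gate = SpeechGate(**gate_kwargs)
    return sum(frame_s for i, p in enumerate(scores) if gate.update(p, i * frame_s))
```

With one score per second, a single burst of speech keeps the stream open only for that burst plus the hangover, rather than for the whole session.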

User Experience

To make Live Transcribe as intuitive as possible, we partnered with Gallaudet University to kickstart user experience research collaborations that would ensure core user needs were satisfied while maximizing the potential of our technologies. We considered several different modalities (computers, tablets, smartphones, and even small projectors), iterating on ways to display auditory information and captions. In the end, we decided to focus on the smartphone form factor because of the sheer ubiquity of these devices and their increasing capabilities.

Once this was established, we needed to address another important issue: displaying transcription confidence. Confidence indicators have traditionally been considered helpful to the user, so our research explored whether we actually needed to show word-level or phrase-level confidence.

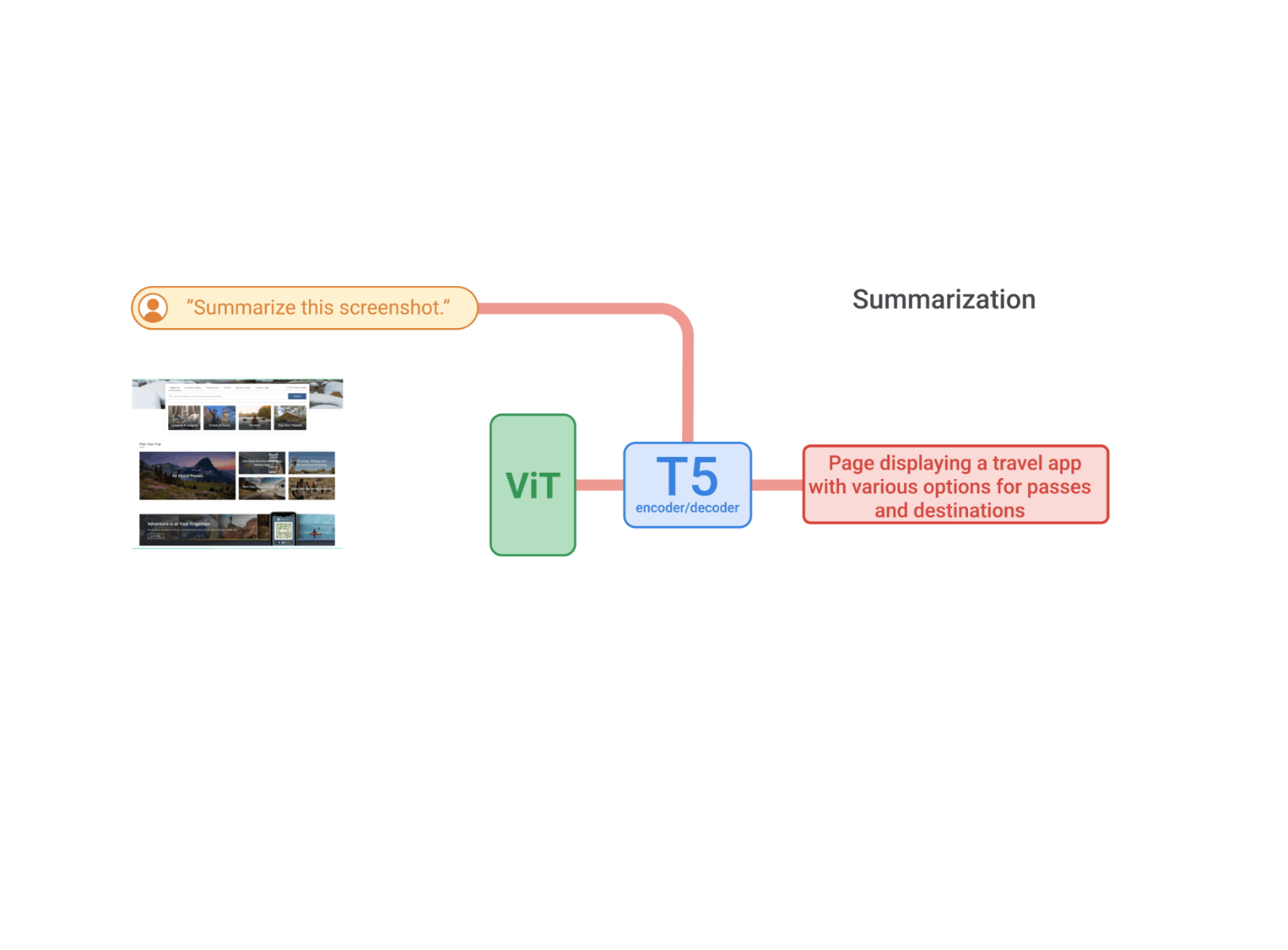

Displaying confidence level of the transcription. Yellow is high confidence, green is medium and blue is low confidence. White is fresh text awaiting context before finalizing. On the left, the coloring is at a per-phrase level, while on the right it is at a per-word level (see footnote 1). Research found both to be distracting to the user without providing conversational value.

Another useful UX signal is the noise level of the user's current environment. Understanding a speaker in a noisy room, known as the cocktail party problem, is a major challenge for computers. To address this, we built an indicator that visualizes the volume of user speech relative to background noise. This also gives users instant feedback on how well the microphone is receiving the incoming speech from the speaker, allowing them to adjust the placement of the phone.
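
As a rough illustration of an indicator that shows speech volume relative to background noise, the Python sketch below maps the most recent audio frame to a value between 0 and 1. It rests on assumptions that are not from Live Transcribe: the function names are hypothetical, the noise floor is crudely estimated as the quietest frame seen so far, and the 30 dB display range is arbitrary.

```python
import math


def rms_db(frame):
    """Root-mean-square level of one audio frame in dB (full scale = 1.0)."""
    mean_sq = sum(s * s for s in frame) / len(frame)
    # Clamp to avoid log10(0) on digital silence.
    return 10.0 * math.log10(max(mean_sq, 1e-12))


def loudness_indicator(frames, max_db=30.0):
    """Map the newest frame to 0..1 by its level above an estimated noise floor.

    Assumption: the quietest frame seen so far approximates background noise.
    """
    levels = [rms_db(f) for f in frames]
    noise_floor = min(levels)
    above = levels[-1] - noise_floor
    # Clip to the displayable range.
    return min(max(above / max_db, 0.0), 1.0)
```

A frame 20 dB above the quietest frame would fill two thirds of the indicator under these settings; a real implementation would likely smooth the noise estimate over time.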

Potential future improvements in mobile-based automatic speech transcription include on-device recognition, speaker separation, and speech enhancement. Relying solely on transcription has pitfalls that can lead to miscommunication. Our research with Gallaudet University shows that combining transcription with other auditory signals, like speech detection and a loudness indicator, makes a tangibly meaningful change in communication options for our users.

Live Transcribe is now available in a staged rollout on the Play Store, and is pre-installed on all Pixel 3 devices with the latest update. Live Transcribe can then be enabled via the Accessibility Settings. You can also read more about it on The Keyword.

Acknowledgements

Live Transcribe was made by researchers Chet Gnegy, Dimitri Kanevsky, and Justin S. Paul in collaboration with Android Accessibility team members Brian Kemler, Thomas Lin, Alex Huang, Jacqueline Huang, Ben Chung, Richard Chang, I-ting Huang, Jessie Lin, Ausmus Chang, Weiwei Wei, Melissa Barnhart and Bingying Xia. We'd also like to thank our close partners from Gallaudet University, Christian Vogler, Norman Williams and Paula Tucker.

1 Eagle-eyed readers can see the phrase-level confidence mode in use by Dr. Obeidat in the video above.