Optimizing Multiple Loss Functions with Loss-Conditional Training

April 27, 2020

Posted by Alexey Dosovitskiy, Research Scientist, Google Research

In many machine learning applications the performance of a model cannot be summarized by a single number, but instead relies on several qualities, some of which may even be mutually exclusive. For example, a learned image compression model should minimize the compressed image size while maximizing its quality. It is often not possible to simultaneously optimize all the values of interest, either because they are fundamentally in conflict, like the image quality and the compression ratio in the example above, or simply due to the limited model capacity. Hence, in practice one has to decide how to balance the values of interest.

If one needs to cover different trade-offs between model qualities (e.g. image quality vs compression rate), the standard practice is to train several separate models with different coefficients in the loss function of each. This requires keeping multiple models around during both training and inference, so training cost and storage grow with the number of trade-offs covered. However, all of these separate models solve closely related problems, suggesting that some information could be shared between them.

In two concurrent papers accepted at ICLR 2020, we propose a simple and broadly applicable approach that avoids the inefficiency of training multiple models for different loss trade-offs and instead uses a single model that covers all of them. In “You Only Train Once: Loss-Conditional Training of Deep Networks”, we give a general formulation of the method and apply it to several tasks, including variational autoencoders and image compression, while in “Adjustable Real-time Style Transfer”, we dive deeper into the application of the method to style transfer.

Loss-Conditional Training

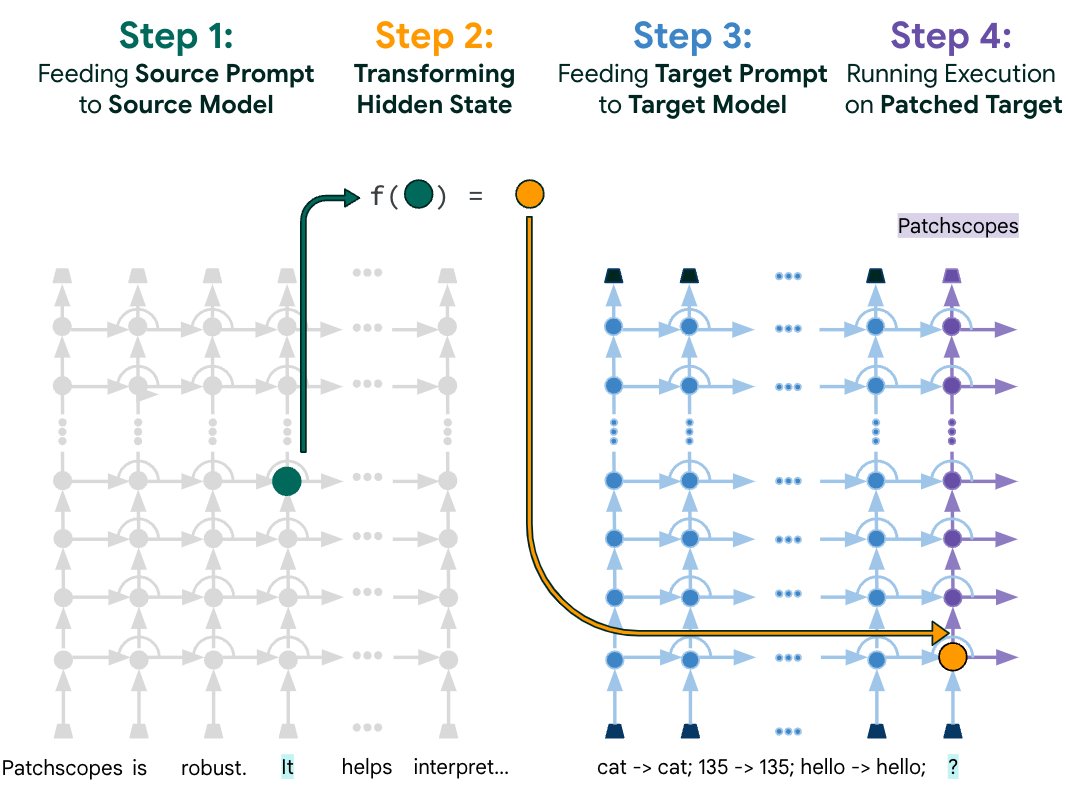

The idea behind our approach is to train a single model that covers all choices of coefficients of the loss terms, instead of training a model for each set of coefficients. We achieve this by (i) training the model on a distribution of losses instead of a single loss function, and (ii) conditioning the model outputs on the vector of coefficients of the loss terms. This way, at inference time the conditioning vector can be varied, allowing us to traverse the space of models corresponding to loss functions with different coefficients.
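One way to write the two ingredients above as an objective is sketched below. The notation is assumed here for illustration and does not appear in this post: $x$ is a training example drawn from a data distribution $P_x$, $F$ is the model with parameters $\theta$, $\lambda$ is the vector of loss coefficients, $P_\lambda$ is a chosen distribution over coefficient vectors, and $\mathcal{L}(x, y, \lambda)$ is the loss of output $y$ under coefficients $\lambda$. Standard practice trains one set of parameters per fixed $\lambda$:

$$
\theta^{*}_{\lambda} = \arg\min_{\theta} \; \mathbb{E}_{x \sim P_x} \, \mathcal{L}\big(x, F(x; \theta), \lambda\big)
$$

Loss-conditional training instead averages over $\lambda$ and passes $\lambda$ to the model as an extra input:

$$
\theta^{*} = \arg\min_{\theta} \; \mathbb{E}_{\lambda \sim P_\lambda} \, \mathbb{E}_{x \sim P_x} \, \mathcal{L}\big(x, F(x, \lambda; \theta), \lambda\big)
$$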

This training procedure is illustrated in the diagram above for the style transfer task. For each training example, the loss coefficients are first sampled at random. They are then used both to condition the main network, via the conditioning network, and to compute the loss. The whole system is trained jointly end-to-end, i.e., the model parameters are trained concurrently with random sampling of loss functions.
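The procedure can be sketched on a toy problem. Everything in the block below is an illustrative assumption rather than the setup used in the papers: the model is a single learned scale, the "conditioning network" is a linear function of one coefficient `alpha`, and the two loss terms (reconstruct the input versus shrink the output to zero) are chosen so that the best output for a fixed `alpha` is known in closed form, namely `(1 - alpha) * x`.

```python
import random


def predict(x, alpha, w, b):
    """Conditioned model: the output scale depends on the loss coefficient alpha."""
    scale = w * alpha + b  # stand-in for the conditioning network
    return scale * x       # stand-in for the main network


def loss(x, y, alpha):
    """Two competing terms: reconstruct x versus shrink the output to zero."""
    return (1.0 - alpha) * (y - x) ** 2 + alpha * y ** 2


def train(steps=5000, lr=0.3, seed=0):
    """Plain SGD in which a fresh loss coefficient is sampled for every example."""
    rng = random.Random(seed)
    w, b = 0.0, 0.0
    for _ in range(steps):
        x = rng.uniform(-1.0, 1.0)     # training example
        alpha = rng.uniform(0.0, 1.0)  # loss coefficient sampled per example
        y = predict(x, alpha, w, b)    # the model is conditioned on alpha
        # Gradient of loss(x, y, alpha) with respect to y, then the chain rule.
        dloss_dy = 2.0 * (1.0 - alpha) * (y - x) + 2.0 * alpha * y
        dloss_dscale = dloss_dy * x
        w -= lr * dloss_dscale * alpha
        b -= lr * dloss_dscale
    return w, b
```

After `w, b = train()`, the single trained model can be queried at any trade-off: `predict(0.5, 0.0, w, b)` is close to `0.5` (pure reconstruction), while `predict(0.5, 1.0, w, b)` is close to `0.0` (pure shrinkage), with intermediate values of `alpha` interpolating between the two.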

Application: Variable-Rate Image Compression

As a first example application of our approach, we show the results for learned image compression. When compressing an image, a user should be able to choose the desired trade-off between the image quality and the compression rate. Classic image compression algorithms are designed to allow for this choice. Yet, many leading learned compression methods require training a separate model for each such trade-off, which is computationally expensive both at training and at inference time. For problems such as this, where one needs a set of models optimized for different losses, our method offers a simple way to avoid inefficiency and cover all trade-offs with a single model.

We apply the loss-conditional training technique to the learned image compression model of Ballé et al. The loss function here consists of two terms: a reconstruction term responsible for the image quality and a compactness term responsible for the compression rate. As illustrated below, our technique allows training a single model covering a wide range of quality-compression trade-offs.
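A minimal sketch of such a two-term loss with a sampled coefficient follows. Several details are assumptions made for illustration and are not stated in this post: the reconstruction term is taken to be mean squared error, the compactness term is taken to be an estimated bits-per-pixel value supplied by the caller, the coefficient `lam` multiplies the compactness term, and it is drawn log-uniformly from a hypothetical range.

```python
import math
import random


def sample_log_uniform(rng, low, high):
    """Draw a coefficient whose logarithm is uniform on [log(low), log(high)]."""
    return math.exp(rng.uniform(math.log(low), math.log(high)))


def mean_squared_error(original, reconstruction):
    """Reconstruction term: average squared pixel difference."""
    return sum((o - r) ** 2 for o, r in zip(original, reconstruction)) / len(original)


def rate_distortion_loss(original, reconstruction, bits_per_pixel, lam):
    """Reconstruction term plus lam times the compactness term."""
    return mean_squared_error(original, reconstruction) + lam * bits_per_pixel
```

During training, a call such as `lam = sample_log_uniform(rng, 0.001, 1.0)` would be made once per example, and the same `lam` would be passed both to the conditioned model and to `rate_distortion_loss`. At inference time, `lam` becomes the user-facing quality setting.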

Compression at different quality levels with a single model. All animations are generated with a single model by varying the conditioning value.

Application: Style Transfer

The second application we demonstrate is artistic style transfer, in which one synthesizes an image by merging the content from one image and the style from another. Recent methods allow training deep networks that stylize images in real time and in multiple styles. However, for a given style these methods do not give the user control over the details of the synthesized output, for instance how strongly to stylize the image and which style features to emphasize. If the stylized output is not appealing to the user, they have to train multiple models with different hyper-parameters until they find a stylization they like.

Our proposed method instead allows training a single model covering a wide range of stylization variants. In this task, we condition the model on a loss function with coefficients for five loss terms: the content loss and four terms of the stylization loss. Intuitively, the content loss regulates how closely the stylized image should resemble the original content, while the four stylization losses define which style features get carried over to the final stylized image. Below we show the outputs of our single model when varying all these coefficients:
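The bookkeeping for a five-coefficient loss can be sketched as follows. The term names, the uniform sampling range, and the helper functions are hypothetical and chosen only for illustration. The post states only that there is one content term and four stylization terms.

```python
import random

# Hypothetical names for the five loss terms; the post only states that there
# is one content term and four style terms.
TERM_NAMES = ("content", "style_1", "style_2", "style_3", "style_4")


def sample_coefficients(rng, low=0.0, high=1.0):
    """Draw one coefficient per loss term (the uniform range is an assumption)."""
    return {name: rng.uniform(low, high) for name in TERM_NAMES}


def weighted_loss(term_values, coefficients):
    """Total loss: each term's value scaled by its sampled coefficient."""
    return sum(coefficients[name] * term_values[name] for name in TERM_NAMES)


def conditioning_vector(coefficients):
    """Fixed-order vector that would be passed to the conditioning network."""
    return [coefficients[name] for name in TERM_NAMES]
```

At inference time no sampling is needed: a user would set the five entries directly, for example raising `"content"` to preserve more of the original image, and pass the resulting `conditioning_vector` to the trained network.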

Adjustable style transfer. All stylizations are generated with a single network by varying the conditioning values.

Conclusion

We have proposed loss-conditional training, a simple and general method that allows training a single deep network for tasks that would previously have required a large set of separately trained networks. While we have shown its application to image compression and style transfer, many more applications are possible: whenever the loss function has coefficients to be tuned, our method allows training a single model covering a wide range of these coefficients.

Acknowledgements

This blog post covers the work of multiple researchers on the Google Brain team: Mohammad Babaeizadeh, Johannes Ballé, Josip Djolonga, Alexey Dosovitskiy, and Golnaz Ghiasi. This blog post would not have been possible without crucial contributions from all of them. Images from the MS-COCO dataset and from unsplash.com are used for illustrations.
