Announcing the 7th Fine-Grained Visual Categorization Workshop

May 20, 2020

Posted by Christine Kaeser-Chen, Software Engineer, and Serge Belongie, Visiting Faculty, Google Research

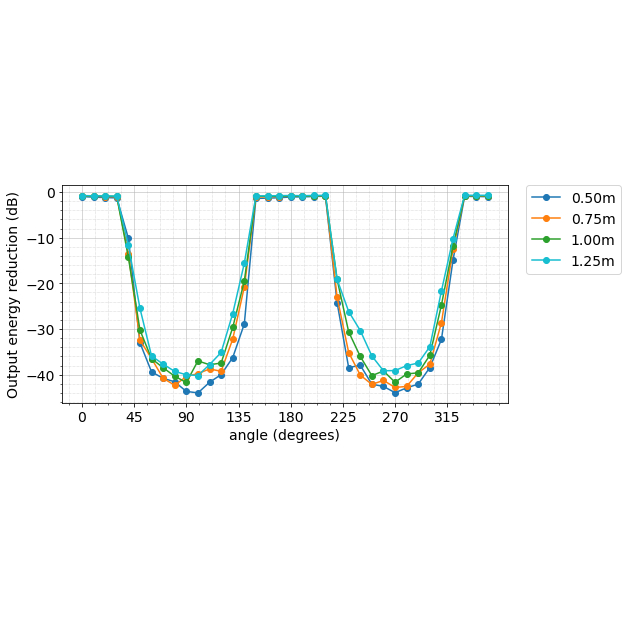

Fine-grained visual categorization refers to the problem of distinguishing between images of closely related entities, e.g., telling a monarch butterfly (Danaus plexippus) from a viceroy (Limenitis archippus). At the time of the first FGVC workshop in 2011, very few fine-grained datasets existed, and the ones that were available (e.g., the CUB dataset of 200 bird species, launched at that workshop) presented a formidable challenge to the leading classification algorithms of the time. Fast forward to 2020, and the computer vision landscape has undergone breathtaking changes. Deep learning-based methods helped CUB-200-2011 accuracy rocket from 17% to 90%, and fine-grained datasets have proliferated, with data arriving from a diverse array of institutions, such as art museums, apparel retailers, and cassava farms.

To support further progress in this field, we are excited to sponsor and co-organize the 7th Workshop on Fine-Grained Visual Categorization (FGVC7), which will take place as a virtual gathering on June 19, 2020, in conjunction with the IEEE Conference on Computer Vision and Pattern Recognition (CVPR). This year’s world-class lineup of fine-grained challenges ranges from fruit tree disease prediction to fashion attributes, and we invite computer vision researchers from across the world to participate in the workshop.

The FGVC workshop at CVPR 2020 focuses on subordinate categories, including (from left to right) wildlife camera traps, plant pathology, birds, herbarium sheets, apparel, and museum artifacts.

In addition to pushing the frontier of fine-grained recognition on ever more challenging datasets, each FGVC workshop cycle provides opportunities for fostering new collaborations between researchers and practitioners. Some of the efforts from the FGVC workshop have made the leap into the hands of real-world users.

The 2018 FGVC workshop hosted a Fungi challenge with data for 1,500 mushroom species provided by the Danish Mycological Society. When the competition concluded, the leaderboard was topped by a team from Czech Technical University and the University of West Bohemia.

The mycologists subsequently invited the Czech researchers to Copenhagen to explore further collaboration and field-test a new workflow for collaborative machine learning research in biodiversity. This resulted in a jointly authored conference paper, a mushroom recognition app for Android and iOS, and an open access model published on TensorFlow Hub.

The Svampeatlas app for mushroom recognition is a result of a Danish-Czech collaboration spun out of the FGVC 2018 Fungi challenge. The underlying model is now published on TF Hub. Images used with permission of the Danish Mycological Society.

Examples of cassava leaf disease represented in the 2019 iCassava challenge.

This Year’s Challenges

FGVC7 will feature six challenges, four of which represent sequels to past offerings, and two of which are brand new.

In iWildCam, the challenge is to identify different species of animals in camera trap images. Like its predecessors in 2018 and 2019, this year’s competition makes use of data from static, motion-triggered cameras used by biologists to study animals in the wild. Participants compete to build models that address diverse regions from around the globe, with a focus on generalization to held-out camera deployments within those regions. These deployments differ in device model, image quality, local environment, lighting conditions, and species distributions, which makes generalization difficult.

It has been shown that species classification performance can be dramatically improved by using information beyond the image itself. In addition, since an ecosystem can be monitored in a variety of ways (e.g., camera traps, citizen scientists, remote sensing), each of which has its own strengths and limitations, it is important to facilitate the exploration of techniques for combining these complementary modalities. To this end, the competition provides a time series of remote sensing imagery for each camera trap location, as well as images from the iNaturalist competition datasets for species in the camera trap data.
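The held-out camera deployment setup described for iWildCam can be imitated locally by splitting data by camera location instead of by image, so that no location contributes images to both sets. The following is a minimal sketch under stated assumptions: the `(image_id, location_id, label)` record layout, the function name `split_by_location`, and the sample data are all hypothetical and are not the competition's actual schema or tooling.

```python
import random
from collections import defaultdict


def split_by_location(records, held_out_fraction=0.2, seed=0):
    """Split records so that no camera location appears in both sets.

    `records` is a list of (image_id, location_id, label) tuples. This
    layout is an assumption for illustration, not the iWildCam schema.
    """
    # Group every record under the camera location that produced it.
    by_location = defaultdict(list)
    for record in records:
        by_location[record[1]].append(record)

    # Shuffle locations (not images) with a fixed seed for repeatability.
    locations = sorted(by_location)
    random.Random(seed).shuffle(locations)

    # Hold out whole locations; always hold out at least one.
    n_held_out = max(1, round(len(locations) * held_out_fraction))
    held_out = set(locations[:n_held_out])

    train = [r for loc in locations if loc not in held_out
             for r in by_location[loc]]
    test = [r for loc in locations if loc in held_out
            for r in by_location[loc]]
    return train, test


# Hypothetical sample: four camera locations, two images each.
records = [
    ("img0", "cam_a", "deer"), ("img1", "cam_a", "fox"),
    ("img2", "cam_b", "deer"), ("img3", "cam_b", "empty"),
    ("img4", "cam_c", "boar"), ("img5", "cam_c", "fox"),
    ("img6", "cam_d", "deer"), ("img7", "cam_d", "empty"),
]
train, test = split_by_location(records, held_out_fraction=0.25)
```

A random per-image split would place images from the same camera in both sets, which tends to overstate how well a model handles a camera it has never seen. Splitting by location avoids that leak at the cost of less control over the exact train/test ratio.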

The Herbarium Challenge, now in its second year, entails plant species identification based on a large, long-tailed collection of herbarium specimens. Developed in collaboration with the New York Botanical Garden (NYBG), this challenge features over 1 million images representing over 32,000 plant species. By comparison, last year’s challenge was based on 46,000 specimens for 680 species. Being able to recognize species from historical herbarium collections can not only help botanists better understand changes in plant life on our planet, but also offers a unique opportunity to identify previously undescribed species in the collection.

Representative examples of specimens from the 2020 Herbarium challenge. Images used with permission of the New York Botanical Garden.

The last of the sequels is iMet, in which participants are challenged with building algorithms for fine-grained attribute classification on works of art. Developed in collaboration with the Metropolitan Museum of Art, the dataset has grown significantly since the 2019 edition, with a wide array of new cataloguing information generated by subject matter experts including multiple object classifications, artist, title, period, date, medium, culture, size, provenance, geographic location, and other related museum objects within the Met’s collection.

Semi-Supervised Aves is one of the new challenges at this year’s workshop. While avian data from iNaturalist has featured prominently in past FGVC challenges, this challenge focuses on the problem of learning from partially labeled data, a form of semi-supervised learning. The dataset is designed to expose some of the challenges encountered in realistic settings, such as the fine-grained similarity between classes, significant class imbalance, and domain mismatch between the labeled and unlabeled data.
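One common way to learn from partially labeled data is pseudo-labeling: fit a model on the labeled examples, predict on the unlabeled ones, and keep only the confident predictions as extra training labels. The sketch below illustrates that idea with a toy nearest-centroid classifier on 2D points. It is not the challenge's baseline or any participant's method; the function names, the margin-based confidence rule, and the data are assumptions made for illustration.

```python
import math


def centroids(points, labels):
    """Return the mean point of each class."""
    sums = {}
    for (x, y), label in zip(points, labels):
        sx, sy, n = sums.get(label, (0.0, 0.0, 0))
        sums[label] = (sx + x, sy + y, n + 1)
    return {label: (sx / n, sy / n) for label, (sx, sy, n) in sums.items()}


def predict_with_margin(point, cents):
    """Return (nearest_label, margin).

    The margin is the distance to the second-nearest centroid minus the
    distance to the nearest one; a larger margin means more confidence.
    """
    dists = sorted((math.dist(point, c), label) for label, c in cents.items())
    margin = dists[1][0] - dists[0][0] if len(dists) > 1 else float("inf")
    return dists[0][1], margin


def pseudo_label(labeled_x, labeled_y, unlabeled_x, min_margin=1.0):
    """One round of pseudo-labeling: adopt only confident predictions."""
    cents = centroids(labeled_x, labeled_y)
    new_x, new_y = list(labeled_x), list(labeled_y)
    for point in unlabeled_x:
        label, margin = predict_with_margin(point, cents)
        if margin >= min_margin:  # ambiguous points stay unlabeled
            new_x.append(point)
            new_y.append(label)
    # Refit on the enlarged training set.
    return new_x, new_y, centroids(new_x, new_y)


# Hypothetical toy data: one labeled point per class, three unlabeled points.
labeled_x = [(0.0, 0.0), (4.0, 0.0)]
labeled_y = ["a", "b"]
unlabeled_x = [(0.5, 0.0), (3.5, 0.0), (2.0, 0.1)]

new_x, new_y, new_cents = pseudo_label(labeled_x, labeled_y, unlabeled_x)
```

In this toy run the two points near a centroid are adopted and the point midway between the classes is left out. A confidence threshold of this kind does not by itself guard against the domain mismatch mentioned above, since an out-of-domain image can still receive a confident but wrong label.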

Rounding out the set of challenges is Plant Pathology. In this challenge, the participants attempt to spot foliar diseases of apples using a reference dataset of expert-annotated diseased specimens. While this particular challenge is new to the FGVC community, it is the second such challenge to involve plant disease, the first being iCassava at last year’s FGVC.

Invitation to Participate

The results of these competitions will be presented at the FGVC7 workshop by top-performing teams. We invite researchers, practitioners, and domain experts to participate in the FGVC workshop to learn more about state-of-the-art advances in fine-grained image recognition. We are excited to encourage the community's development of cutting-edge algorithms for fine-grained visual categorization and foster new collaborations with global impact!

Acknowledgements

We’d like to thank our colleagues and friends on the FGVC7 organizing committee for working together to advance this important area. At Google, we would like to thank Hartwig Adam, Kiat Chuan Tan, Arvi Gjoka, Kimberly Wilber, Sara Beery, Mikhail Sirotenko, Denis Brulé, Timnit Gebru, Ernest Mwebaze, Wojciech Sirko, and Maggie Demkin.