AutoFlip: An Open Source Framework for Intelligent Video Reframing

February 13, 2020

Posted by Nathan Frey, Senior Software Engineer, Google Research, Los Angeles and Zheng Sun, Senior Software Engineer, Google Research, Mountain View

Videos filmed and edited for television and desktop are typically created and viewed in landscape aspect ratios (16:9 or 4:3). However, with an increasing number of users creating and consuming content on mobile devices, historical aspect ratios don’t always fit the display being used for viewing. Traditional approaches for reframing video to different aspect ratios usually involve static cropping, i.e., specifying a camera viewport and then cropping out any visual content that falls outside it. Unfortunately, these static cropping approaches often lead to unsatisfactory results due to the variety of composition and camera motion styles. More bespoke approaches, however, typically require video curators to manually identify salient content in each frame, track its transitions from frame to frame, and adjust crop regions accordingly throughout the video. This process is often tedious, time-consuming, and error-prone.

To address this problem, we are happy to announce AutoFlip, an open source framework for intelligent video reframing. AutoFlip is built on top of the MediaPipe framework, which enables the development of pipelines for processing time-series multimodal data. Taking a video (casually shot or professionally edited) and a target dimension (landscape, square, portrait, etc.) as inputs, AutoFlip analyzes the video content, develops optimal tracking and cropping strategies, and produces an output video with the same duration in the desired aspect ratio.

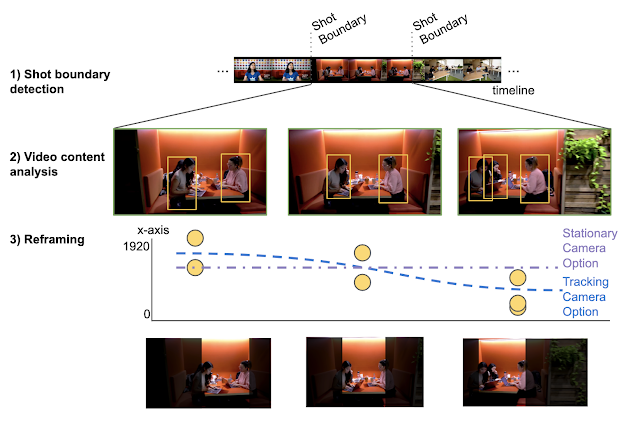

AutoFlip provides a fully automatic solution to smart video reframing, making use of state-of-the-art ML-enabled object detection and tracking technologies to intelligently understand video content. AutoFlip detects changes in the composition that signify scene changes in order to isolate scenes for processing. Within each shot, video analysis is used to identify salient content before the scene is reframed by selecting a camera mode and path optimized for the contents.

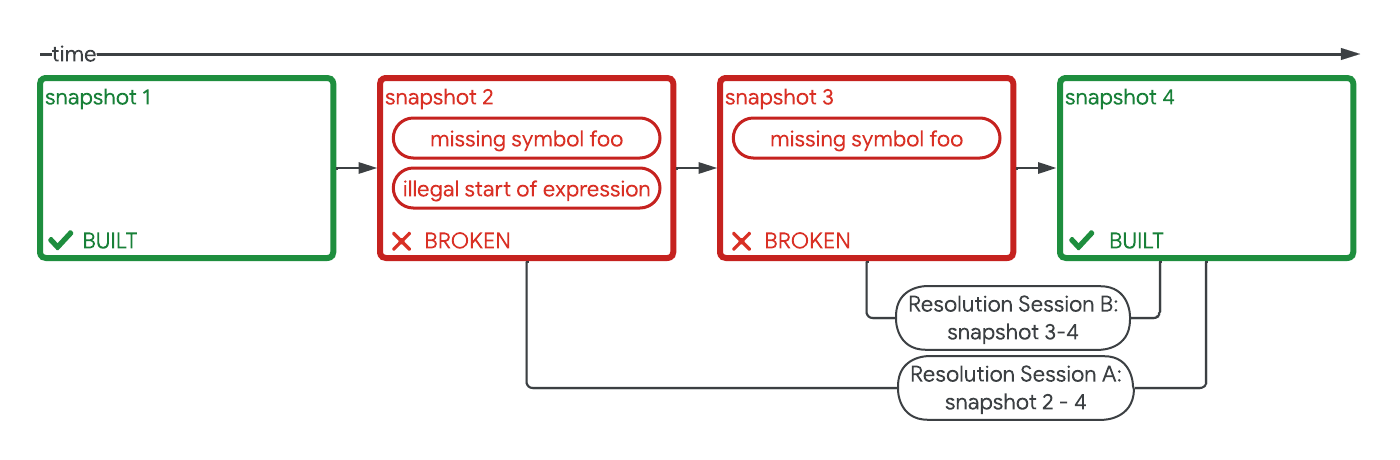

Shot (Scene) Detection

A scene or shot is a continuous sequence of video without cuts (or jumps). To detect a shot change, AutoFlip computes the color histogram of each frame and compares it with those of prior frames. If the distribution of frame colors changes at a rate that differs from the rate observed over a sliding window of recent frames, a shot change is signaled. AutoFlip buffers the video until the scene is complete before making reframing decisions, so that it can optimize the reframing for the entire scene.
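To make the idea concrete, here is a minimal sketch of histogram-based shot detection in plain Python. It is not AutoFlip's actual implementation: the frame representation (a list of RGB tuples), the bin count, the window size, and both thresholds are assumptions chosen for illustration.

```python
from collections import deque


def color_histogram(frame, bins=4):
    """Normalized joint RGB histogram of a frame.

    `frame` is an iterable of (r, g, b) tuples with values in 0..255
    (an assumed representation, chosen to keep the sketch dependency-free).
    """
    hist = [0.0] * (bins ** 3)
    step = 256 // bins
    count = 0
    for r, g, b in frame:
        idx = (r // step) * bins * bins + (g // step) * bins + (b // step)
        hist[idx] += 1.0
        count += 1
    return [h / count for h in hist] if count else hist


def histogram_distance(h1, h2):
    """L1 distance between two normalized histograms (0 = identical, 2 = disjoint)."""
    return sum(abs(a - b) for a, b in zip(h1, h2))


def detect_shot_changes(frames, window=5, ratio=3.0, min_change=0.2):
    """Return the indices of frames that appear to start a new shot.

    A change is flagged when the frame-to-frame histogram distance exceeds
    both `min_change` and `ratio` times the mean distance over the last
    `window` frames. All three parameters are illustrative assumptions.
    """
    changes = []
    recent = deque(maxlen=window)  # sliding window of recent distances
    prev = None
    for i, frame in enumerate(frames):
        hist = color_histogram(frame)
        if prev is not None:
            d = histogram_distance(prev, hist)
            baseline = sum(recent) / len(recent) if recent else 0.0
            if d > min_change and d > ratio * baseline:
                changes.append(i)
                recent.clear()  # start a fresh history for the new shot
            else:
                recent.append(d)
        prev = hist
    return changes
```

Comparing each distance against a running baseline, rather than a single fixed threshold, is what lets a scheme like this tolerate shots with steady motion or gradual lighting changes.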

Video Content Analysis

We utilize deep learning-based object detection models to find interesting, salient content in the frame. This content typically includes people and animals, but other elements may be identified, depending on the application, including text overlays and logos for commercials, or motion and ball detection for sports.

The face and object detection models are integrated into AutoFlip through MediaPipe, which uses TensorFlow Lite on CPU. This structure allows AutoFlip to be extensible, so developers may conveniently add new detection algorithms for different use cases and video content. Each object type is associated with a weight value, which defines its relative importance — the higher the weight, the more influence the feature will have when computing the camera path.
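As a rough illustration of how per-type weights could steer the camera, the sketch below computes a weighted focus point from a set of detections. The `Detection` class, the weight values, and the `weighted_focus_point` helper are all hypothetical; AutoFlip's own configuration and data types may differ.

```python
from dataclasses import dataclass


@dataclass
class Detection:
    """A detected region in normalized image coordinates (hypothetical type)."""
    label: str
    x: float       # left edge, 0..1
    y: float       # top edge, 0..1
    width: float
    height: float


# Illustrative weights only; these are not AutoFlip's defaults.
SIGNAL_WEIGHTS = {"face": 0.9, "person": 0.8, "pet": 0.75, "text": 0.3}


def weighted_focus_point(detections, weights=SIGNAL_WEIGHTS, default_weight=0.1):
    """Return the weighted average (x, y) of the detection centers.

    Higher-weight object types pull the focus point toward themselves.
    Returns None when there is nothing to focus on.
    """
    total = 0.0
    fx = 0.0
    fy = 0.0
    for d in detections:
        w = weights.get(d.label, default_weight)
        fx += w * (d.x + d.width / 2.0)
        fy += w * (d.y + d.height / 2.0)
        total += w
    if total == 0.0:
        return None
    return fx / total, fy / total
```

With a face centered at x = 0.25 (weight 0.9) and a text overlay centered at x = 0.75 (weight 0.3), the focus point lands at x = 0.375, noticeably closer to the face.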

Left: People detection on sports footage. Right: Two face boxes (‘core’ and ‘all’ face landmarks). In narrow portrait crop cases, often only the core landmark box can fit.

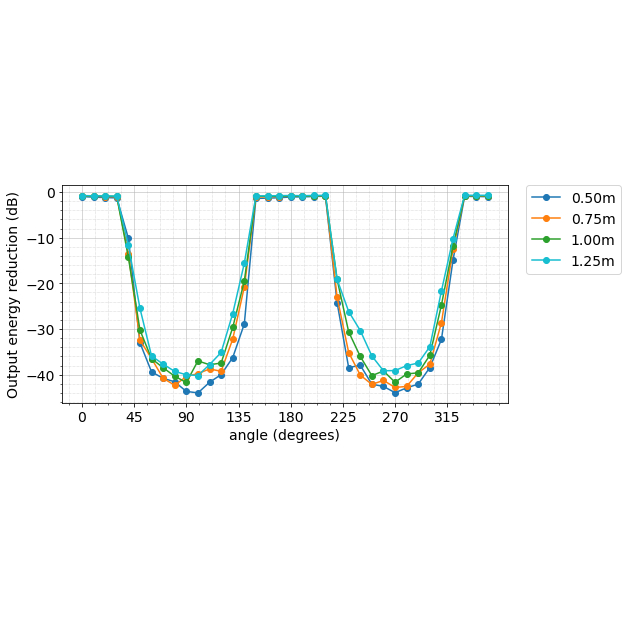

After identifying the subjects of interest on each frame, logical decisions about how to reframe the content for a new view can be made. AutoFlip automatically chooses an optimal reframing strategy — stationary, panning or tracking — depending on the way objects behave during the scene (e.g., moving around or stationary). In stationary mode, the reframed camera viewport is fixed in a position where important content can be viewed throughout the majority of the scene. This mode can effectively mimic professional cinematography in which a camera is mounted on a stationary tripod or where post-processing stabilization is applied. In other cases, it is best to pan the camera, moving the viewport at a constant velocity. The tracking mode provides continuous and steady tracking of interesting objects as they move around within the frame.
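The post does not spell out the exact criteria AutoFlip uses to pick a mode, so the following is only one plausible sketch. It assumes the per-frame focus position has been reduced to a single horizontal coordinate, and it uses two hypothetical thresholds (`slack` and `max_residual`). As a simplification, it requires the content to stay in view for every frame rather than for the majority of the scene.

```python
def linear_fit(values):
    """Least-squares line through (frame_index, value); returns (slope, intercept)."""
    n = len(values)
    mean_t = (n - 1) / 2.0
    mean_v = sum(values) / n
    var_t = sum((t - mean_t) ** 2 for t in range(n))
    if var_t == 0:
        return 0.0, mean_v
    cov = sum((t - mean_t) * (v - mean_v) for t, v in enumerate(values))
    slope = cov / var_t
    return slope, mean_v - slope * mean_t


def choose_camera_mode(centers, slack, max_residual=0.05):
    """Pick 'stationary', 'panning', or 'tracking' for one scene.

    centers: per-frame horizontal focus positions, normalized to 0..1.
    slack: how far the focus may drift from a fixed viewport center while
        still remaining visible (hypothetical parameter).
    max_residual: largest deviation from a constant-velocity path that
        still counts as a pan (hypothetical parameter).
    """
    if not centers:
        raise ValueError("scene has no focus positions")
    mid = (max(centers) + min(centers)) / 2.0
    if all(abs(c - mid) <= slack for c in centers):
        return "stationary"  # one fixed viewport covers the whole scene
    slope, intercept = linear_fit(centers)
    worst = max(abs(c - (slope * t + intercept)) for t, c in enumerate(centers))
    if worst <= max_residual:
        return "panning"     # a constant-velocity path follows the content
    return "tracking"        # content moves irregularly; follow it smoothly
```

The ordering reflects a preference for the simplest camera motion that keeps the content in view: a fixed viewport first, then a constant-velocity pan, and continuous tracking only when neither suffices.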

Based on which of these three reframing strategies the algorithm selects, AutoFlip then determines an optimal cropping window for each frame, while best preserving the content of interest. While the bounding boxes track the objects of focus in the scene, they typically exhibit considerable jitter from frame-to-frame and, consequently, are not sufficient to define the cropping window. Instead, we adjust the viewport on each frame through the process of Euclidean-norm optimization, in which we minimize the residuals between a smooth (low-degree polynomial) camera path and the bounding boxes.
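The smoothing step can be sketched as an ordinary least-squares fit of a low-degree polynomial to the jittery per-frame centers. The version below solves the normal equations directly in plain Python; it works on one coordinate only, and the function names, the default degree, and the clamping helper are assumptions for illustration rather than AutoFlip's actual code.

```python
def smooth_camera_path(centers, degree=2):
    """Fit a degree-`degree` polynomial to per-frame centers by least squares.

    Minimizes the sum of squared residuals (the Euclidean norm of the error)
    between the polynomial path and the observed centers, and returns the
    smoothed center for every frame. Degree 0 gives a fixed position and
    degree 1 a constant-velocity path.
    """
    n = len(centers)
    m = degree + 1
    if n < m:
        raise ValueError("need more frames than polynomial coefficients")
    # Normalize time to [0, 1] to keep the normal equations well conditioned.
    ts = [i / max(n - 1, 1) for i in range(n)]
    # Normal equations A c = b with A[j][k] = sum(t^(j+k)), b[j] = sum(v * t^j).
    A = [[sum(t ** (j + k) for t in ts) for k in range(m)] for j in range(m)]
    b = [sum(v * t ** j for t, v in zip(ts, centers)) for j in range(m)]
    # Gaussian elimination with partial pivoting.
    for col in range(m):
        pivot = max(range(col, m), key=lambda r: abs(A[r][col]))
        A[col], A[pivot] = A[pivot], A[col]
        b[col], b[pivot] = b[pivot], b[col]
        for row in range(col + 1, m):
            f = A[row][col] / A[col][col]
            for k in range(col, m):
                A[row][k] -= f * A[col][k]
            b[row] -= f * b[col]
    # Back substitution.
    coeffs = [0.0] * m
    for row in range(m - 1, -1, -1):
        s = sum(A[row][k] * coeffs[k] for k in range(row + 1, m))
        coeffs[row] = (b[row] - s) / A[row][row]
    return [sum(c * t ** p for p, c in enumerate(coeffs)) for t in ts]


def crop_window_left(center, crop_width):
    """Left edge of a crop window of `crop_width` centered on `center`,
    clamped so the window stays inside the normalized frame [0, 1]."""
    return min(max(center - crop_width / 2.0, 0.0), 1.0 - crop_width)
```

Feeding the smoothed centers to `crop_window_left` yields one crop window per frame that follows the content without inheriting the jitter of the raw bounding boxes.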

Top: Camera paths resulting from following the bounding boxes from frame-to-frame. Bottom: Final smoothed camera paths generated using Euclidean-norm path formation. Left: Scene in which objects are moving around, requiring a tracking camera path. Right: Scene where objects stay close to the same position; a stationary camera covers the content for the full duration of the scene.

AutoFlip Use Cases

We are excited to release this tool directly to developers and filmmakers, reducing the barriers to their design creativity and reach through the automation of video editing. The ability to adapt any video format to various aspect ratios is becoming increasingly important as the diversity of devices for video content consumption continues to rapidly increase. Whether your use case is portrait to landscape, landscape to portrait, or even small adjustments like 4:3 to 16:9, AutoFlip provides a solution for intelligent, automated and adaptive video reframing.
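For a sense of the arithmetic behind these conversions, the sketch below computes the largest crop window of a target aspect ratio that fits inside a source frame. The `largest_crop` helper is hypothetical and covers only the cropping case, not padding.

```python
from fractions import Fraction


def largest_crop(src_width, src_height, target_w, target_h):
    """Largest (width, height) in pixels with aspect ratio target_w:target_h
    that fits inside a src_width x src_height frame (rounded down)."""
    target = Fraction(target_w, target_h)
    source = Fraction(src_width, src_height)
    if target < source:
        # Target is narrower than the source: keep full height, trim width.
        return (src_height * target_w) // target_h, src_height
    # Target is as wide as or wider than the source: keep full width, trim height.
    return src_width, (src_width * target_h) // target_w


print(largest_crop(1920, 1080, 9, 16))  # landscape to portrait: (607, 1080)
print(largest_crop(640, 480, 16, 9))    # 4:3 to 16:9: (640, 360)
```

A landscape-to-portrait conversion keeps less than a third of the original width, which is why choosing where to place the window matters so much.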

What’s Next?

Like any machine learning algorithm, AutoFlip can benefit from an improved ability to detect objects relevant to the intent of the video, such as speaker detection for interviews or animated face detection on cartoons. Additionally, a common issue arises when input video has important overlays on the edges of the screen (such as text or logos) as they will often be cropped from the view. By combining text/logo detection and image inpainting technology, we hope that future versions of AutoFlip can reposition foreground objects to better fit the new aspect ratios. Lastly, in situations where padding is required, deep uncrop technology could provide improved ability to expand beyond the original viewable area.

While we work to improve AutoFlip internally at Google, we encourage contributions from developers and filmmakers in the open source communities.

Acknowledgments

We would like to thank our colleagues who contributed to AutoFlip: Alexander Panagopoulos, Jenny Jin, Brian Mulford, Yuan Zhang, Alex Chen, Xue Yang, Mickey Wang, Justin Parra, Hartwig Adam, Jingbin Wang, and Weilong Yang; and the MediaPipe team members who helped with open sourcing: Jiuqiang Tang, Tyler Mullen, Mogan Shieh, Ming Guang Yong, and Chuo-Ling Chang.